Researching your Practice

Once you have identified your research topic and refined your research question, the next stage is to decide on your design and methodology – what information you will collect to answer your question, and how you will collect it. Once you have decided on these, you need a plan with SMART goals and a realistic timetable to ensure successful completion.

Research Design

A key consideration in choosing the appropriate design is whether you want your results to be ‘generalisable’ (i.e. to apply to all similar research participants now and in the future), or whether you are happy to find out what applies only to the people who actually participated in your project (a ‘snapshot in time’). The former will require statistical input to identify the sample size sufficient to answer your question, and a method of selecting that sample from all the possible participants in whom you are interested – the method of randomisation. The latter is much more straightforward: your participants are the people in the organisation or setting of interest whom you can approach and who agree to participate.

The nature of the design and methodology will be determined primarily by your research question, but may also be influenced by the time and resources available to you. Ideally you might wish to generate results which can be generalised, but in the real world, limits on time, expertise and other resources may lead you towards a snapshot approach.

For the purpose of this blog, I am concentrating on research which looks at effectiveness – particularly the effectiveness (or otherwise) of coaching and mentoring. This could focus on the impact on an organisation, a single individual, or a number of individuals. Examples of research methods which look at effectiveness include experiments, observational studies, surveys, case studies and qualitative research.

Experimental research requires that the ‘research subjects’ or participants receive an intervention which is expected to have an impact on them and/or their organisation; measurements are taken before and after the intervention; and the researcher can conclude whether the intervention ‘makes a difference’ to the things which are being measured. Examples of interventions include executive coaching with Board level directors; performance coaching with middle managers; mentoring with young people.

The ‘gold standard’ for identifying whether an intervention achieves its desired outcome is the ‘randomised controlled trial’ (RCT), as used in trials of clinical interventions such as new medicines. Briefly, this method involves at least two groups: the ‘experimental’ group (who receive the intervention) and the ‘control’ group (who do not). ‘Before’ and ‘after’ measurements from each group are compared. The two groups are matched for key indicators – e.g. age, gender, level of seniority in the organisation. The total number of participants and the number in each group are determined by a statistical process, the power calculation. Research participants are allocated to each group by a process of randomisation, which means that each individual has an equal chance of being allocated to either group. The power calculation and randomisation improve the likelihood that the results are genuine and can be generalised, rather than having happened by chance and applying only to the individuals included.
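To make the power calculation and randomisation a little more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a substitute for statistical advice: the sample size uses a standard normal-approximation formula for comparing the means of two groups, the effect size of 0.5 is a made-up value, and the function names and participant IDs are hypothetical. It also does not attempt the matching on key indicators described above.

```python
import math
import random
from statistics import NormalDist


def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate number of participants needed in EACH of two groups.

    Normal-approximation formula: n = 2 * ((z_alpha + z_power) / d) ** 2,
    where d is the standardised effect size (difference in means divided
    by the standard deviation). The value of d is an assumption you must
    justify for your own project.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # desired statistical power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


def randomise(participants, seed=None):
    """Shuffle the participants and split them into two equal-sized groups."""
    rng = random.Random(seed)  # a fixed seed makes the allocation repeatable
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}


# Hypothetical example: a 'medium' effect size of 0.5.
n_per_group = sample_size_per_group(effect_size=0.5)
print(n_per_group)  # 63

# Hypothetical participant IDs, allocated at random to the two groups.
participant_ids = [f"P{i:03d}" for i in range(1, 2 * n_per_group + 1)]
groups = randomise(participant_ids, seed=42)
print(len(groups["experimental"]), len(groups["control"]))  # 63 63
```

Note how quickly the numbers grow: halving the assumed effect size roughly quadruples the sample needed, which is one reason RCTs are so demanding.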

RCTs clearly involve complex, expensive and time-consuming processes. Whilst new medications cannot legally be brought to market without proven safety and effectiveness, other types of intervention can be evaluated by less intensive methods, although the results will not be as robust. A more readily used ‘before’ and ‘after’ comparison, either without a control group or with an unmatched control group, is the cohort study, in which a group of people is assessed before the intervention (e.g. a programme of executive coaching) and again after the intervention has been completed. If used with a single organisation, this approach could be described as a case study, although a full case study would also involve analysis of the organisational context as well as the impact of the coaching programme. If it involves a single individual, this would also be a case study, but would not give any information beyond the individual concerned.
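A simple ‘before’ and ‘after’ comparison for a single cohort might be summarised along the following lines. This is a sketch only: the scores are invented, the function name is hypothetical, and the paired t statistic is just one common way of expressing how large the average change is relative to its variability. Without a control group, a change cannot be attributed to the coaching alone.

```python
import math
from statistics import mean, stdev


def before_after_summary(before, after):
    """Summarise the change in a score measured on the same people twice."""
    if len(before) != len(after):
        raise ValueError("each participant needs a 'before' and an 'after' score")
    # Change for each participant: 'after' score minus 'before' score.
    changes = [a - b for b, a in zip(before, after)]
    mean_change = mean(changes)
    sd_change = stdev(changes)  # sample standard deviation of the changes
    # Paired t statistic: the mean change divided by its standard error.
    t_stat = mean_change / (sd_change / math.sqrt(len(changes)))
    return {
        "n": len(changes),
        "mean_change": mean_change,
        "sd_change": sd_change,
        "t": t_stat,
    }


# Hypothetical motivation scores (scale 1-10) for five coachees.
before_scores = [5, 6, 4, 7, 5]
after_scores = [7, 7, 6, 8, 6]

summary = before_after_summary(before_scores, after_scores)
print(summary["n"], summary["mean_change"])  # 5 1.4
```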

Research Methods

When looking at the impact or effectiveness of coaching and mentoring, both organisational and individual indicators can be used – for example, the impact of management coaching on sickness absence rates and/or on retention and turnover figures; or the reported well-being and motivation of coachees before and after the programme. This approach (using both quantitative and qualitative methods) is described as a ‘multi-method’ study, because it involves more than one method. The quantitative information includes the indicators mentioned above – absence rates, turnover, or productivity – which organisations will be collecting routinely. Information about individuals will probably need to be collected specially by the researcher. This could be achieved through questionnaires, individual interviews or focus groups. Questionnaires may be used to collect quantitative information about participants (e.g. age, gender, ethnicity; or answers to ‘multiple choice’ questions) and qualitative information about their attitudes and behaviour in respect of either giving or receiving the coaching.

So, what do I mean by ‘quantitative’ information and ‘qualitative’ information? Fundamentally, the former is expressed in numbers and the latter in language. Quantitative information may be rates, inputs such as hours worked, or outputs such as the number of goods produced or services completed; or numbers may be allocated to linguistic answers (e.g. Male = 1; Female = 2; LGBTI = 3). Qualitative methods are especially useful for researching people’s feelings and thoughts about processes and allow you to probe more deeply into matters which could be quite sensitive. Some examples of the main methods for collecting information are provided below, with some of the strengths and weaknesses identified.

- Questionnaire surveys are usually self-completed without you being present to offer clarification to the respondent. They may be administered as paper documents or, more commonly now, online. The benefits of questionnaires include the fact that they are relatively inexpensive and easy to send out, so a higher number of participants can be approached for the same resource input as interviews or focus groups. Weaknesses include the need to phrase questions so that there is absolutely no ambiguity about precisely what information is being requested, and the difficulty of encouraging people to complete and return the questionnaire. Ease of analysis will depend on how well the questions are phrased and whether the answers requested are numerical or linguistic.

- Organisational information such as sickness absence rates and staff turnover is usually collected routinely – as a researcher you will need to be able to access this information. Some thought should be given to how this information will be analysed.

- Semi-structured and structured interviews are often used to collect ‘feelings’ information from people, for example about how they experienced the process of coaching or being coached. These have the advantage of the researcher being present, so that the interviewee(s) can seek clarification and the researcher can probe more deeply into the answers provided. If you want to ask about more sensitive and personal issues (e.g. career plans), interviews will have an advantage over focus groups, in which people may not be willing to divulge their personal plans and ambitions. Interviews also have the advantage of sharing some skills with coaching – e.g. building rapport, questioning and deep listening.

- Focus groups can be useful in that the opinions of a larger number of people can be collected at one session, and members of the group may ‘spark’ ideas off each other. Using group discussion will require some skills additional to those needed for individual interviews – e.g. facilitating the discussion and ensuring all members of the group have the opportunity to contribute, similar to team coaching.

There are some similarities in the skills required for research and for coaching, although the purpose of research is different: to collect information which will enable you to draw conclusions about the question you originally asked.
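Returning to the earlier point about allocating numbers to linguistic answers, the sketch below shows one way questionnaire responses might be coded before analysis. The five-point agreement scale, the coding scheme and the answers are all hypothetical, chosen only for illustration. Bear in mind that codes for categories with no natural order (such as gender) are simply labels, so counting them is meaningful but averaging them is not.

```python
from collections import Counter

# Hypothetical coding scheme for a five-point agreement question.
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "neutral": 3,
    "agree": 4,
    "strongly agree": 5,
}


def code_answers(answers, codes=LIKERT_CODES):
    """Convert text answers to numeric codes.

    Answers are matched ignoring case and surrounding spaces; anything
    not in the coding scheme becomes None so it can be checked by hand.
    """
    return [codes.get(answer.strip().lower()) for answer in answers]


# Hypothetical responses to "The coaching improved my motivation".
responses = ["Agree", "strongly agree", "Neutral", "agree ", "no idea"]

coded = code_answers(responses)
print(coded)  # [4, 5, 3, 4, None]

# Count how often each valid code occurs.
counts = Counter(code for code in coded if code is not None)
print(counts[4])  # 2
```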

Research Planning

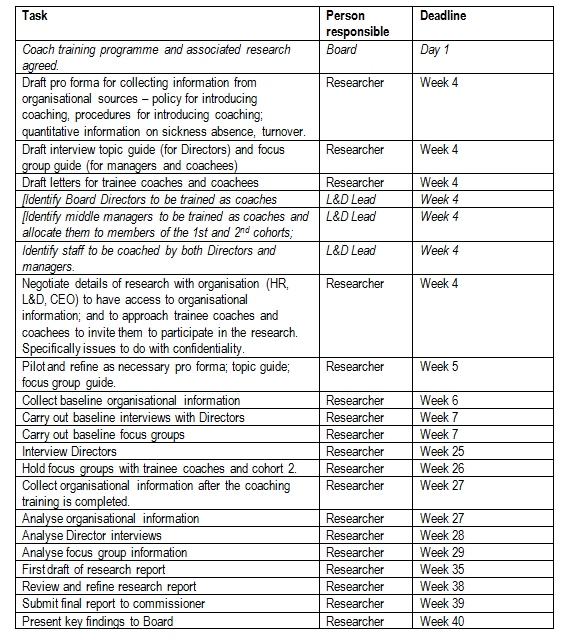

As with any complex set of activities, successful achievement depends on a clear and realistic plan. The precise details will of course depend on the specific research project. The following example of a plan is based on a case study approach to a research project which will evaluate the effectiveness of introducing a programme of coaching into an organisation. The organisational plan is to provide Executive Coaching training to Board directors, and Performance Coaching to senior and middle managers. The middle managers will be trained in two consecutive cohorts – the cohort to be trained first will be the ‘experimental’ group and the second will be the ‘control’ group. Both groups will be asked about their motivation and commitment to the organisation and their engagement. The research has been commissioned by the L&D Lead and will be carried out by an external researcher.

This plan includes activities which need to be carried out by other parties, shown in italics. The purpose of this is to highlight the dependence of researchers on others. It may take longer than expected for the organisation to complete its tasks, and there may be reasons (outside the researcher’s control) why the timescale drifts. It is essential to be prepared for this, to be realistic in your timescale, and to build in some ‘slack’ so that, in the event of delays, it remains likely that you will complete the project on time.

My next blog will look at issues of data collection and analysis.